Granted, this first effort at only 3.4 single-precision TFLOPS is not enough for real AI work. But it's a hella start for such a small form factor. Two to three doublings later (3.4 → 6.8 → 13.6 → 27.2 TFLOPS), about 10 years in computer time, it will be blasting past 20 TFLOPS on chip, enough for simulated brains at a low level. And that would be amazing. A brain on EVERY notebook and tablet computer. It's hard to fathom.
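The doubling arithmetic is easy to check. Taking the post's 3.4 TFLOPS as the starting point, this small Java sketch prints the throughput after each doubling and counts how many whole doublings are needed to pass 20 TFLOPS. It assumes clean doublings with nothing else changing, and the class and method names are illustrative only.

```java
/** Illustrative projection of throughput under repeated clean doublings. */
public class DoublingProjection {

    /** Throughput after a number of clean doublings of a starting value. */
    static double afterDoublings(double startTflops, int doublings) {
        return startTflops * Math.pow(2, doublings);
    }

    /** Smallest whole number of doublings needed to reach or pass a target. */
    static int doublingsToReach(double startTflops, double targetTflops) {
        int n = 0;
        for (double t = startTflops; t < targetTflops; t *= 2) {
            n++;
        }
        return n;
    }

    public static void main(String[] args) {
        double start = 3.4; // single-precision TFLOPS quoted in the post
        for (int n = 0; n <= 3; n++) {
            System.out.printf("after %d doubling(s): %.1f TFLOPS%n", n, afterDoublings(start, n));
        }
        // Fractional number of doublings to hit exactly 20 TFLOPS: log2(20 / 3.4)
        double exact = Math.log(20.0 / start) / Math.log(2);
        System.out.printf("exact doublings to 20 TFLOPS: %.2f%n", exact);
        System.out.println("whole doublings to pass 20 TFLOPS: " + doublingsToReach(start, 20.0));
    }
}
```

Two doublings land at 13.6 TFLOPS and a third at 27.2 TFLOPS, so 20 TFLOPS sits at roughly 2.56 doublings.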

In that time we need to walk away from Google's idiot video-matching AI architectures and move to more dynamic neural cubes that implement Edelman's population dynamics, layers with spiking triggers between them, Pribramic holographic principles, and dynamic synaptic growth. The first library to manage this, JOONN (Java Object Oriented Neural Network), is one I am developing now as part of the Noonean Cybernetics core technology. It will eventually be open-sourced.
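To make "layers with spiking triggers between them" and "dynamic synaptic growth" a bit more concrete, here is a minimal Java sketch. It is not JOONN and not its API. The class names, the leaky integrate-and-fire neuron model, and the growth rule (connect any pre/post pair that fires on the same tick) are all assumptions chosen for illustration.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical sketch (not the JOONN API): two spiking layers with synapses grown at runtime. */
public class SpikingLayers {

    /** A layer of leaky integrate-and-fire neurons. */
    static final class Layer {
        final double[] potential;
        final boolean[] spiked;
        final double threshold;
        final double leak; // fraction of potential kept from one tick to the next

        Layer(int size, double threshold, double leak) {
            this.potential = new double[size];
            this.spiked = new boolean[size];
            this.threshold = threshold;
            this.leak = leak;
        }

        /** Leak, integrate the input, then fire and reset; returns the spike count. */
        int step(double[] input) {
            int count = 0;
            for (int i = 0; i < potential.length; i++) {
                potential[i] = potential[i] * leak + input[i];
                spiked[i] = potential[i] >= threshold;
                if (spiked[i]) {
                    potential[i] = 0.0;
                    count++;
                }
            }
            return count;
        }
    }

    /** A directed synapse from a pre-layer neuron to a post-layer neuron. */
    static final class Synapse {
        final int pre, post;
        final double weight;
        Synapse(int pre, int post, double weight) {
            this.pre = pre;
            this.post = post;
            this.weight = weight;
        }
    }

    final Layer pre;
    final Layer post;
    final List<Synapse> synapses = new ArrayList<>();
    final boolean[][] connected; // prevents growing the same synapse twice
    final double initialWeight;

    SpikingLayers(Layer pre, Layer post, double initialWeight) {
        this.pre = pre;
        this.post = post;
        this.connected = new boolean[pre.potential.length][post.potential.length];
        this.initialWeight = initialWeight;
    }

    /** One tick: pre spikes trigger current in the post layer, then new synapses grow. */
    int tick(double[] preInput, double[] postDrive) {
        pre.step(preInput);
        double[] current = postDrive.clone();
        for (Synapse s : synapses) {
            if (pre.spiked[s.pre]) {
                current[s.post] += s.weight;
            }
        }
        int fired = post.step(current);
        grow();
        return fired;
    }

    /** Growth rule (assumed): connect every pre/post pair that spiked on the same tick. */
    void grow() {
        for (int i = 0; i < pre.spiked.length; i++) {
            if (!pre.spiked[i]) continue;
            for (int j = 0; j < post.spiked.length; j++) {
                if (post.spiked[j] && !connected[i][j]) {
                    connected[i][j] = true;
                    synapses.add(new Synapse(i, j, initialWeight));
                }
            }
        }
    }

    public static void main(String[] args) {
        SpikingLayers net = new SpikingLayers(new Layer(3, 1.0, 0.9), new Layer(3, 1.0, 0.9), 1.2);
        // Tick 1: drive pre neuron 0 and post neuron 2 together, so a 0 -> 2 synapse grows
        net.tick(new double[] {1.5, 0, 0}, new double[] {0, 0, 1.5});
        System.out.println("synapses grown: " + net.synapses.size());
        // Tick 2: drive only pre neuron 0; the grown synapse alone now fires post neuron 2
        System.out.println("post spikes: " + net.tick(new double[] {1.5, 0, 0}, new double[3]));
    }
}
```

The point of the toy run is that the second tick's spike in the post layer travels over a connection that did not exist at construction time; a real system would also need pruning and weight adaptation, which this sketch leaves out.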

The key point is that image matching is NOT brain simulation, nor is it real cognition. Cognitive science says to march closely with what nature is doing. Yes, there are still things we have to add, since our initial efforts lack nature's huge levels of complexity and scale.

We are definitely waiting for native Java extensions for these parallel chips, so we can do this work in higher-order Java and still be hyper-efficient and parallel at the chip level. Don't hold your breath: their translation libraries are junky, and that's simple stuff. It will probably have to come from Oracle's Java core team.
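Until chip-native Java extensions exist, the closest stock-Java approximation of this "higher-order Java, parallel underneath" style is probably a parallel stream, which spreads work across CPU cores through the fork/join pool rather than across GPU compute units. The sketch below is only that CPU stand-in; the class name and the leaky-neuron update are illustrative assumptions.

```java
import java.util.stream.IntStream;

/** CPU-parallel stand-in for the chip-level parallelism the post is waiting for. */
public class ParallelNeuronUpdate {

    /**
     * Updates every neuron in parallel. Each index is written by exactly one task,
     * so the array writes do not race. Returns the number of neurons that spiked.
     */
    static int step(double[] potential, double[] input, boolean[] spiked,
                    double leak, double threshold) {
        IntStream.range(0, potential.length).parallel().forEach(i -> {
            double v = potential[i] * leak + input[i];
            spiked[i] = v >= threshold;
            potential[i] = spiked[i] ? 0.0 : v; // reset neurons that fired
        });
        // Count sequentially afterward instead of relying on side effects inside filter or map
        int count = 0;
        for (boolean s : spiked) {
            if (s) count++;
        }
        return count;
    }

    public static void main(String[] args) {
        int n = 1_000_000;
        double[] potential = new double[n];
        double[] input = new double[n];
        boolean[] spiked = new boolean[n];
        for (int i = 0; i < n; i++) {
            input[i] = (i % 2 == 0) ? 1.5 : 0.5; // even neurons get a supra-threshold kick
        }
        System.out.println("spikes: " + step(potential, input, spiked, 0.9, 1.0));
    }
}
```

Keeping each lambda invocation confined to its own index means the code reads like ordinary serial Java while the runtime decides how to split the range across cores, which is the programming model the post wants pushed down to the chip.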

AMD needs to spin off a neural network chip design branch and develop 3D chipsets, or at the very least stackable designs that are more cube-based rather than long cards designed for the data center. We need cube chips (which they showed in their Frontier announcement) that are about 4" on a side and can deliver 100 TFLOPS while using only a minimal amount of power. We ain't there yet, but there's no reason we couldn't be in six years with hard effort.

Also, the memory amounts are far too small. Even a Frontier card has only 16GB of HBM, while large neural simulations need far more. Take 8B neurons: a 2G cube, where 2 giavellis means 2048 neurons on each side of the cube (2048³ ≈ 8.6 billion), each neuron operated at 100Hz. (Sorry, I invented the measure because none existed.) With 8B neurons, we would need something on the order of 1TB of HBM. As I said, a long way to go.

We can cheat with two strategies: using smaller interconnects and data stores per neuron, or using a temporary load scheme, which slows everything down. But until the hardware is ready, these at least give us a way to test complex architectures.
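The memory figures can be checked with a few lines of arithmetic. This Java sketch assumes binary units (1TB read as 2^40 bytes, 16GB read as 2^34 bytes) and the post's 2G cube of 2048 neurons per side updated at 100Hz; the class and method names are illustrative, and the last line simply divides the post's 100 TFLOPS cube-chip target by the cube's update rate.

```java
/** Back-of-the-envelope budget for a cube of neurons (binary units assumed). */
public class GiavelliBudget {

    /** Neurons in a cube with the given number of neurons along each edge. */
    static long neurons(long side) {
        return side * side * side;
    }

    /** Memory available per neuron, in whole bytes, for a given memory size. */
    static long bytesPerNeuron(long memoryBytes, long neurons) {
        return memoryBytes / neurons;
    }

    public static void main(String[] args) {
        long n = neurons(2048);     // 2G cube: 2048 neurons per side
        long oneTiB = 1L << 40;     // "1TB", read as 2^40 bytes
        long sixteenGiB = 1L << 34; // 16GB card, read as 2^34 bytes
        double hz = 100.0;          // update rate per neuron from the post

        System.out.println("neurons: " + n);
        System.out.println("bytes per neuron in 1 TiB: " + bytesPerNeuron(oneTiB, n));
        System.out.println("bytes per neuron in 16 GiB: " + bytesPerNeuron(sixteenGiB, n));
        System.out.printf("neuron updates per second: %.3e%n", n * hz);
        System.out.printf("FLOPs per update at 100 TFLOPS: %.0f%n", 100e12 / (n * hz));
    }
}
```

Under these assumptions 1 TiB allows about 128 bytes of state and connectivity per neuron, while a 16 GiB card leaves only 2 bytes per neuron, which is why slimmer per-neuron storage or a temporary load scheme is needed in the meantime.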

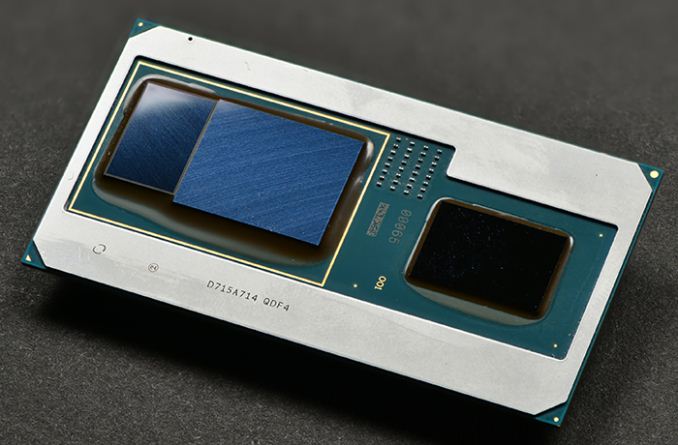

So yay for Intel and AMD. Now they just need to hire a real neural cognitive scientist to tell them what they really need to be building: not cards for faster games, but cubes for faster BRAINS!
